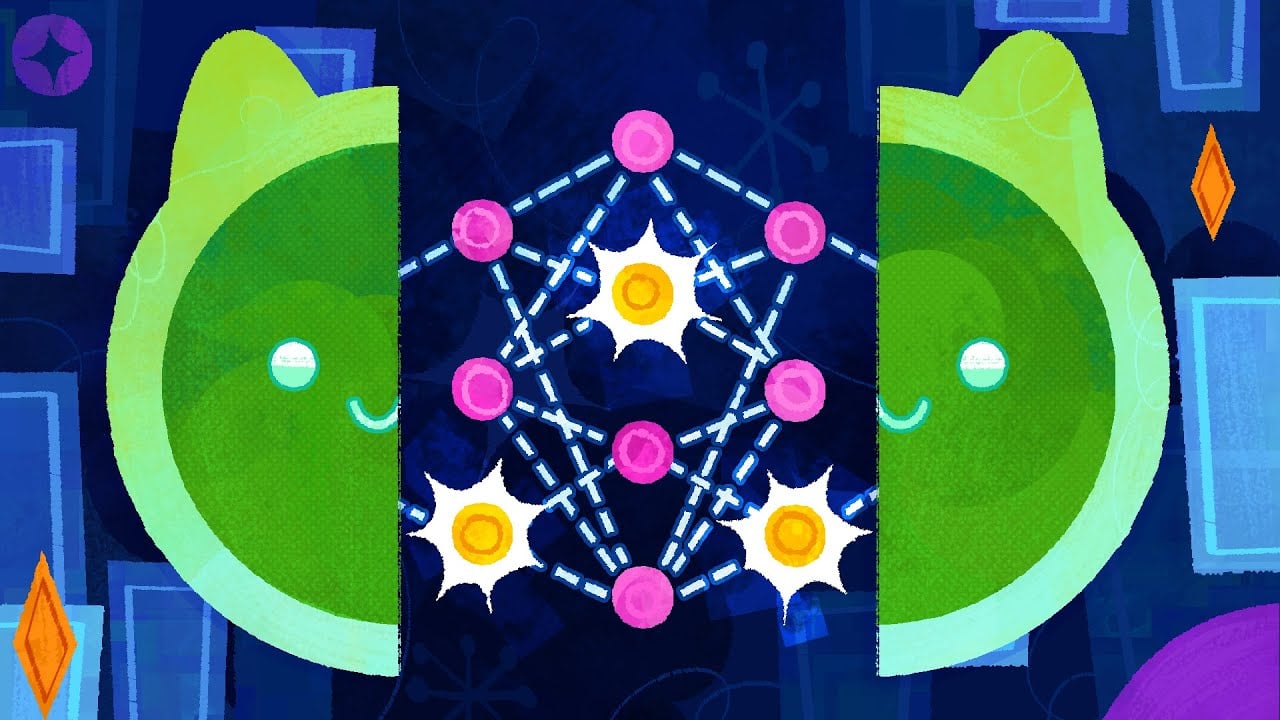

Neural networks have become increasingly impressive in recent years, but there’s a big catch: we don’t really know what they are doing. We give them data and ways to get feedback, and somehow, they learn all kinds of tasks. It would be really useful, especially for safety purposes, to understand what they have learned and how they work after they’ve been trained. The ultimate goal is not only to understand in broad strokes what they’re doing but also to reverse-engineer precisely the algorithms encoded in their parameters. This is the ambitious goal of mechanistic interpretability. As an introduction to this field, we show how researchers have been able to partly reverse-engineer how InceptionV1, a convolutional neural network, recognizes images.

They absolutely do not learn, and we absolutely do know how they work. It’s pretty simple.

https://jasonheppler.org/2024/05/23/i-made-this/

Yes, but the tokens are more than just a stream of letters, and they aren’t stored as words. The information is organized by its conceptual proximity to other concepts (distinct from the text itself) and weighted in a way consistent with the model’s training.
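To make “conceptual proximity” a bit more concrete: one common way to measure how close two learned representations are is the cosine similarity between their vectors. The sketch below assumes made-up 3-dimensional embeddings for three hypothetical words; real models learn vectors with far more dimensions, and these numbers are invented purely for illustration.

```python
import math

# Hypothetical toy embeddings. Real models learn much higher-dimensional
# vectors during training; these values are invented for illustration only.
EMBEDDINGS = {
    "cat":    [0.9, 0.8, 0.1],
    "kitten": [0.85, 0.75, 0.2],
    "car":    [0.1, 0.2, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(word):
    """Return the other word whose embedding points in the most similar direction."""
    query = EMBEDDINGS[word]
    others = (w for w in EMBEDDINGS if w != word)
    return max(others, key=lambda w: cosine_similarity(query, EMBEDDINGS[w]))


print(nearest("cat"))
```

Here "cat" and "kitten" end up close because their vectors point in nearly the same direction, not because they share letters.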

That’s why these models can use analogies and metaphors persuasively in certain contexts. Combine concepts in ways the training data never showed, and these LLMs can still produce output consistent with those concepts.

Anthropic experimented with its own model, emphasizing or de-emphasizing particular concepts and observing some unexpected behavior.
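As a rough illustration of what "emphasizing or de-emphasizing a concept" can mean mechanically, here is a generic sketch of steering: adding a scaled concept direction to a hidden activation vector. This is not Anthropic’s code or method. The activation and the concept direction are hypothetical values, and in a real model the direction would first have to be found, for example with a trained probe or a learned dictionary feature.

```python
import math


def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def steer(activation, concept_direction, strength):
    """Add `strength` times the unit concept direction to the activation.

    Positive strength emphasizes the concept; negative strength de-emphasizes it.
    """
    unit = normalize(concept_direction)
    return [a + strength * u for a, u in zip(activation, unit)]


def concept_score(activation, concept_direction):
    """Projection of the activation onto the unit concept direction."""
    unit = normalize(concept_direction)
    return sum(a * u for a, u in zip(activation, unit))


# Hypothetical values for a single layer's activation and a "concept" direction.
hidden = [0.2, -0.1, 0.5, 0.0]
concept = [0.0, 0.0, 0.0, 1.0]

boosted = steer(hidden, concept, 3.0)
suppressed = steer(hidden, concept, -3.0)
print(concept_score(hidden, concept), concept_score(boosted, concept), concept_score(suppressed, concept))
```

Everything downstream of the modified layer then sees the shifted activation, which is one way a nudge along a single direction can change the model’s behavior in surprising ways.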

And we’d have trouble saying whether a model “knows” something if we don’t have a robust definition of when and whether a human brain “knows” something.